6 How to read CSV file in PySpark | PySpark Tutorial

6. PySpark Interview Task | Deloitte, KPMG, Accenture, PwC, Deutsche Bank Data Engineer Preparation

pyspark tutorial

Databricks Quick Tips: Skip Records when reading CSV Files in PySpark

17. azure databricks interview questions | #databricks #pyspark #interview #azure #azuredatabricks

PySpark! Reading CSV files into a DataFrame

PySpark Full Course | Basic to Advanced Optimization with Spark UI PySpark Training | Spark Tutorial

Reading a CSV file using Pyspark in Jupyter Notebook

PySpark Tutorial | Full Course (From Zero to Pro!)

3. PySpark I Different Ways to Read CSV File I Hands on Tutorial I

46. Date functions in PySpark | add_months(), date_add(), date_sub() functions #pspark #spark

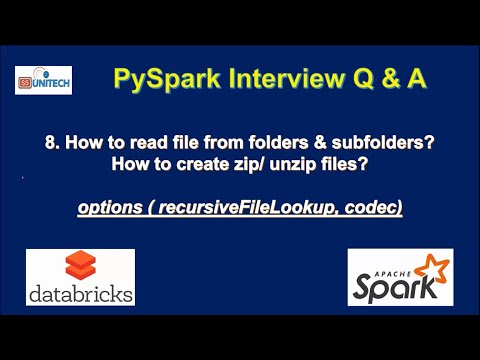

8. how to read files from subfolders in pyspark | how to create zip file in pyspark

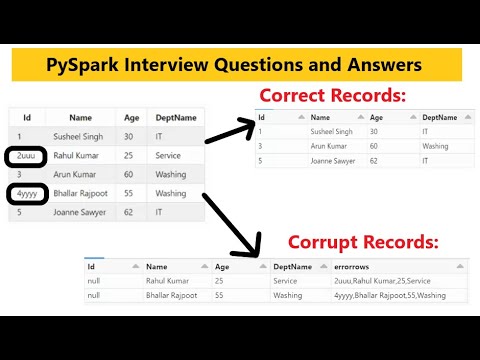

11. How to handle corrupt records in pyspark | How to load Bad Data in error file pyspark | #pyspark

42. Greatest vs Max functions in pyspark | PySpark tutorial for beginners | #pyspark | #databricks

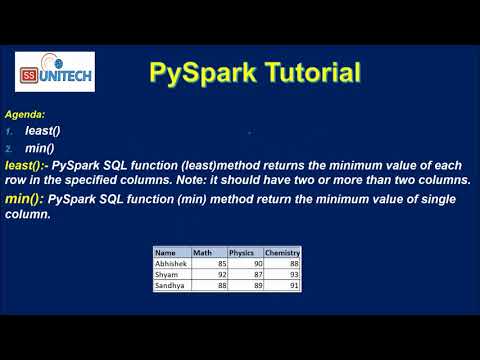

43. least in pyspark | min in pyspark | PySpark tutorial | #pyspark | #databricks | #ssunitech

44. Date functions in PySpark | current_date(), to_date(), date_format() functions #pspark #spark

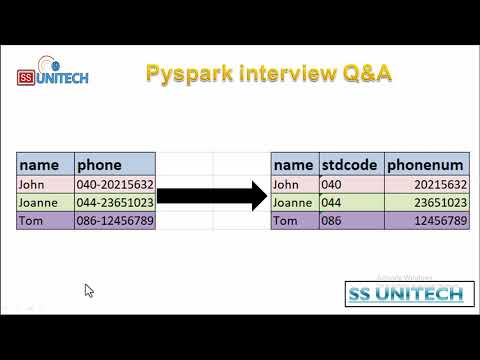

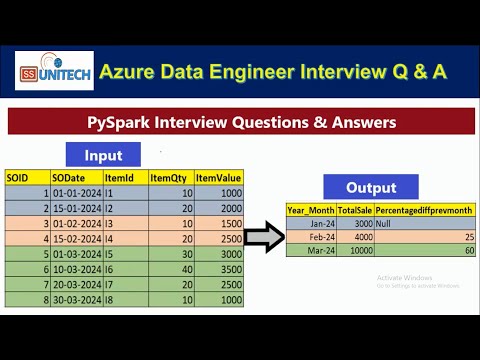

13. Pepsico pyspark interview question and answer | azure data engineer interview Q & A | databricks

9. delimiter in pyspark | linesep in pyspark | inferSchema in pyspark | pyspark interview q & a

10. How to load only correct records in pyspark | How to Handle Bad Data in pyspark #pyspark

12. how partition works internally in PySpark | partition by pyspark interview q & a | #pyspark

15. deloitte azure databricks interview questions | #databricks #pyspark #interview #azure